A simple example for testing a neural network implementation is trying to learn the digits 0..9 from a seven-segment display representation. Figure 19.8 shows the arrangement of the segments and the numerical input and training output for the neural network, which could be read from a data file. Note that there are ten output neurons, one for each digit, 0..9. This will be much easier to learn than, for example, a four-bit binary encoded output (0000 to 1001).
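Figure 19.8 is not reproduced here, so the file format below is an assumption: each line holds the seven segment inputs (in the hypothetical order a, b, c, d, e, f, g) followed by the ten one-hot target outputs. This minimal Python sketch reads such a file and checks that every line has the expected 7 + 10 values.

```python
# Hypothetical data-file layout (an assumption, not the format of Figure 19.8):
# 7 segment inputs (a..g), then 10 one-hot outputs for digits 0..9.
DATA = """\
1 1 1 1 1 1 0   1 0 0 0 0 0 0 0 0 0
0 1 1 0 0 0 0   0 1 0 0 0 0 0 0 0 0
1 1 0 1 1 0 1   0 0 1 0 0 0 0 0 0 0
1 1 1 1 0 0 1   0 0 0 1 0 0 0 0 0 0
0 1 1 0 0 1 1   0 0 0 0 1 0 0 0 0 0
1 0 1 1 0 1 1   0 0 0 0 0 1 0 0 0 0
1 0 1 1 1 1 1   0 0 0 0 0 0 1 0 0 0
1 1 1 0 0 0 0   0 0 0 0 0 0 0 1 0 0
1 1 1 1 1 1 1   0 0 0 0 0 0 0 0 1 0
1 1 1 1 0 1 1   0 0 0 0 0 0 0 0 0 1
"""

NUM_INPUTS = 7    # one input neuron per segment
NUM_OUTPUTS = 10  # one output neuron per digit


def load_patterns(text):
    """Return a list of (inputs, targets) pairs, one per non-blank line."""
    patterns = []
    for line in text.splitlines():
        values = [float(v) for v in line.split()]
        if not values:
            continue  # skip blank lines
        if len(values) != NUM_INPUTS + NUM_OUTPUTS:
            raise ValueError(f"expected {NUM_INPUTS + NUM_OUTPUTS} values, got {len(values)}")
        patterns.append((values[:NUM_INPUTS], values[NUM_INPUTS:]))
    return patterns


patterns = load_patterns(DATA)
```

With one-hot targets, each output neuron only has to answer "is this my digit?", whereas a binary encoding would force single output bits to respond to unrelated groups of digits.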

Figure 19.9 shows how the total error decreases as the backpropagation procedure is applied to the complete input data set over some 700 iterations.

Eventually the goal of an error value below 0.1 is reached and the algorithm terminates. The weights stored in the neural net are now ready to take on previously unseen real data. In this example, the trained network could, for instance, be tested against seven-segment inputs with a single defective segment (always on or always off).
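The training run described above can be sketched in plain Python. This is a minimal illustration, not the book's implementation: the segment order a..g, the ten hidden neurons, the bias weights, the learning rate of 0.5, and the use of the summed squared error as "total error" are all assumptions. Training stops at the 0.1 error goal, and the net is then queried with a digit that has one defective segment.

```python
import math
import random

# Segment order a, b, c, d, e, f, g is an assumption; Figure 19.8 may differ.
SEGMENTS = ["1111110", "0110000", "1101101", "1111001", "0110011",
            "1011011", "1011111", "1110000", "1111111", "1111011"]
PATTERNS = [([float(s) for s in SEGMENTS[d]],
             [1.0 if k == d else 0.0 for k in range(10)]) for d in range(10)]
N_IN, N_HID, N_OUT = 7, 10, 10  # hidden-layer size is an assumption


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def init_weights(rng):
    # The extra (last) weight of each neuron is a bias fed by a constant 1.0.
    w_hid = [[rng.uniform(-0.5, 0.5) for _ in range(N_IN + 1)] for _ in range(N_HID)]
    w_out = [[rng.uniform(-0.5, 0.5) for _ in range(N_HID + 1)] for _ in range(N_OUT)]
    return w_hid, w_out


def forward(x, w_hid, w_out):
    xb = x + [1.0]
    hid = [sigmoid(sum(w * v for w, v in zip(row, xb))) for row in w_hid]
    hb = hid + [1.0]
    out = [sigmoid(sum(w * v for w, v in zip(row, hb))) for row in w_out]
    return hid, out


def train(w_hid, w_out, rate=0.5, goal=0.1, max_epochs=3000):
    """Online backpropagation; returns the total squared error of each epoch."""
    errors = []
    for _ in range(max_epochs):
        total = 0.0
        for x, target in PATTERNS:
            hid, out = forward(x, w_hid, w_out)
            total += sum((t - o) ** 2 for t, o in zip(target, out))
            # Output deltas: error times the sigmoid derivative o * (1 - o).
            d_out = [(t - o) * o * (1 - o) for t, o in zip(target, out)]
            # Hidden deltas: output deltas propagated back through w_out.
            d_hid = [h * (1 - h) * sum(d_out[k] * w_out[k][j] for k in range(N_OUT))
                     for j, h in enumerate(hid)]
            hb, xb = hid + [1.0], x + [1.0]
            for k in range(N_OUT):
                for j in range(N_HID + 1):
                    w_out[k][j] += rate * d_out[k] * hb[j]
            for j in range(N_HID):
                for i in range(N_IN + 1):
                    w_hid[j][i] += rate * d_hid[j] * xb[i]
        errors.append(total)
        if total < goal:  # stop once the error goal is reached
            break
    return errors


def classify(x, w_hid, w_out):
    """Index of the most active output neuron, i.e. the recognized digit."""
    _, out = forward(x, w_hid, w_out)
    return max(range(N_OUT), key=lambda k: out[k])


w_hid, w_out = init_weights(random.Random(1))
errors = train(w_hid, w_out)
print(f"{len(errors)} epochs, final total error {errors[-1]:.3f}")

# Unseen input: digit 2 (1101101) with segment a stuck off.
print("2 with segment a off ->", classify([0.0, 1.0, 0.0, 1.0, 1.0, 0.0, 1.0], w_hid, w_out))
```

The number of epochs needed depends on the random starting weights and the learning rate, so it will not necessarily match the roughly 700 iterations of Figure 19.9.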

Neural Controller

Control of mobile robots produces tangible actions from sensor inputs. A controller for a robot receives input from its sensors, processes the data using relevant logic, and sends appropriate signals to the actuators. For most large tasks, the ideal mapping from input to action is neither clearly specified nor readily apparent. Such tasks require a control program that must be carefully designed and tested in the robot’s operational environment. The creation of these control programs is an ongoing concern in robotics as the range of viable application domains expands, increasing the complexity of tasks expected of autonomous robots.

A number of questions need to be answered before the feed-forward ANN in the figure can be implemented. Among them are:

• How can the success of the network be measured? The robot should perform collision-free left-wall following.

• How can the training be performed? In simulation or on the real robot.

• What is the desired motor output for each situation? The motor function that drives the robot close to the wall on the left-hand side and avoids collisions.
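One way to answer the last question is a hand-written "teacher" function that produces the desired motor output for each sensor situation, from which training pairs can be generated in simulation. The sketch below is purely hypothetical: the two sensors (left and front distance), the millimetre thresholds, and the speed values are assumptions chosen for illustration. Speeds lie in [0, 1] so they match the range of sigmoid output neurons.

```python
# Hypothetical distances in millimetres; none of these values come from the text.
WALL_TARGET = 150   # desired distance to the left wall
WALL_BAND = 30      # tolerance around the target
FRONT_MIN = 200     # closer than this counts as an imminent collision


def desired_motors(left_dist, front_dist):
    """Target (left_motor, right_motor) speeds in [0, 1] for one situation."""
    if front_dist < FRONT_MIN:
        return (1.0, 0.0)   # obstacle ahead: pivot right, away from the wall
    if left_dist > WALL_TARGET + WALL_BAND:
        return (0.5, 1.0)   # too far from the wall: curve left
    if left_dist < WALL_TARGET - WALL_BAND:
        return (1.0, 0.5)   # too close to the wall: curve right
    return (1.0, 1.0)       # within the band: drive straight


# Training pairs for a grid of simulated sensor readings.
training_set = [((left, front), desired_motors(left, front))
                for left in range(50, 401, 50)
                for front in range(100, 1001, 300)]
```

A rule table like this only defines the training targets; the point of the network is to generalize smoothly between these sampled situations.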

Neural networks have been successfully used to mediate directly between sensors and actuators to perform certain tasks. Past research has focused on using neural net controllers to learn individual behaviors. Vershure developed a working set of behaviors by employing a neural net controller to drive a set of motors from collision detection, range finding, and target detection sensors [Vershure et al. 1995]. The on-line learning rule of the neural net was designed to emulate the action of Pavlovian classical conditioning. The resulting controller associated actions beneficial to task performance with positive feedback.

Adaptive logic networks (ALNs), a variation of NNs that use only Boolean operations for computation, were successfully employed in simulation by Kube et al. to perform simple cooperative group behaviors [Kube, Zhang, Wang 1993]. The advantage of the ALN representation is that it can be mapped directly to hardware once the controller has reached a suitable working state.

In Chapter 22, an implementation of a neural controller is described that is used as an arbitrator or selector of a number of behaviors. Instead of applying a learning method like the backpropagation shown in Section 19.3, a genetic algorithm is used to evolve a neural network that satisfies the requirements.

Friday, April 18, 2008

Neural Network Example

NEURAL NETWORKS

The artificial neural network (ANN), often simply called neural network (NN), is a processing model loosely derived from biological neurons [Gurney 2002]. Neural networks are often used for classification or decision-making problems that do not have a simple or straightforward algorithmic solution. The beauty of a neural network is its ability to learn an input-to-output mapping from a set of training cases without explicit programming, and then to generalize this mapping to cases not seen previously. There is a large research community as well as numerous industrial users working on neural network principles and applications [Rumelhart, McClelland 1986], [Zaknich 2003]. In this chapter, we only briefly touch on this subject and concentrate on the topics relevant to mobile robots.

Neural Network Principles:

A neural network is constructed from a number of individual units called neurons that are linked with each other via connections. Each individual neuron has a number of inputs, a processing node, and a single output, while each connection from one neuron to another is associated with a weight. Processing in a neural network takes place in parallel for all neurons. Each neuron constantly (in an endless loop) evaluates (reads) its inputs, calculates its local activation value according to a formula shown below, and produces (writes) an output value.

The activation function of a neuron a(I, W) is the weighted sum of its inputs, i.e. each input is multiplied by the associated weight and all these terms are added. The neuron’s output is determined by the output function o(I, W), for which numerous different models exist. In the simplest case, just thresholding is used for the output function. For our purposes, however, we use the non-linear “sigmoid” output function defined in Figure 19.1 and shown in Figure 19.2, which has superior characteristics for learning (see Section 19.3). This sigmoid function approximates the Heaviside step function, with a parameter controlling the slope of the graph (usually set to 1).
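Since Figure 19.1 is not reproduced here, the sketch below uses the common logistic form o = 1 / (1 + e^(-ρ·a)), where ρ is an assumed name for the slope parameter. It shows the weighted-sum activation, the sigmoid output, and the simple threshold output for comparison; a large ρ makes the sigmoid approach the step function.

```python
import math


def activation(inputs, weights):
    """a(I, W): each input multiplied by its weight, all terms added."""
    return sum(i * w for i, w in zip(inputs, weights))


def sigmoid_output(inputs, weights, rho=1.0):
    """o(I, W) = 1 / (1 + exp(-rho * a)); rho (assumed name) sets the slope."""
    z = rho * activation(inputs, weights)
    # Two algebraically equal forms, chosen so math.exp never overflows.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def threshold_output(inputs, weights, threshold=0.0):
    """The simplest output function: a hard step at the threshold."""
    return 1.0 if activation(inputs, weights) >= threshold else 0.0


print(sigmoid_output([1.0, 0.5], [0.8, -0.4]))            # smooth value in (0, 1)
print(sigmoid_output([1.0, 0.5], [0.8, -0.4], rho=50.0))  # close to the step output
print(threshold_output([1.0, 0.5], [0.8, -0.4]))
```

The sigmoid is preferred for learning because, unlike the step, it is differentiable everywhere, which backpropagation requires.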

Feed-Forward Networks:

A neural net is constructed from a number of interconnected neurons, which are usually arranged in layers. The outputs of one layer of neurons are connected to the inputs of the following layer. The first layer of neurons is called the “input layer”, since its inputs are connected to external data, for example sensors to the outside world. The last layer of neurons is called the “output layer”, accordingly, since its outputs are the result of the total neural network and are made available to the outside. These could be connected, for example, to robot actuators or external decision units. All neuron layers between the input layer and the output layer are called “hidden layers”, since their actions cannot be observed directly from the outside.

If all connections go from the outputs of one layer to the inputs of the next layer, and there are no connections within the same layer or connections from a later layer back to an earlier layer, then this type of network is called a “feed-forward network”. Feed-forward networks (Figure 19.3) are used for the simplest types of ANNs and differ significantly from feedback networks, which we will not look into further here.

For most practical applications, a single hidden layer is sufficient, so the typical NN for our purposes has exactly three layers:

• Input layer (for example input from robot sensors)

• Hidden layer (connected to input and output layer)

• Output layer (for example output to robot actuators)

Perceptron:

Incidentally, the first feed-forward network proposed by Rosenblatt had only two layers, one input layer and one output layer [Rosenblatt 1962]. However, these so-called “Perceptrons” were severely limited in their computational power because of this restriction, as Minsky and Papert showed soon after [Minsky, Papert 1969]. Unfortunately, this publication almost brought neural network research to a halt for several years, although the restriction applies only to two-layer networks, not to networks with three or more layers. In the standard three-layer network, the input layer is usually simplified so that the input values are taken directly as neuron activations; no activation function is applied to input neurons.
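A forward pass through the standard three-layer network can be sketched as follows. As described above, the input layer applies no activation function and simply passes the sensor values on; only the hidden and output layers compute a weighted sum followed by a sigmoid. The layer sizes and weight values in the example are hypothetical.

```python
import math


def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))


def neuron_layer(values, weights):
    """Outputs of one layer; weights[j][i] connects input i to neuron j."""
    return [sigmoid(sum(w * v for w, v in zip(row, values))) for row in weights]


def feed_forward(inputs, w_hidden, w_output):
    """Three-layer net: the input layer passes values through unchanged."""
    hidden = neuron_layer(inputs, w_hidden)   # input -> hidden
    return neuron_layer(hidden, w_output)     # hidden -> output


# Hypothetical example: 3 sensor readings, 2 hidden neurons, 2 motor outputs.
w_hidden = [[0.5, -0.2, 0.1], [-0.3, 0.8, 0.4]]
w_output = [[1.0, -1.0], [-0.5, 0.7]]
print(feed_forward([0.2, 0.9, 0.4], w_hidden, w_output))
```

Because every connection points from one layer to the next, a single pass from input to output computes the network's result; there is no state to iterate as in a feedback network.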

Friday, April 11, 2008

Working Of Remote Control System

Wireless control has always seemed to fascinate people, and Questor’s remote control system is the heart of his appeal. While the technical aspects of remote control may be a little hard for the novice to grasp, Questor’s remote control system is rather simple in construction. Before I go into detail on how the system is put together, a brief explanation of remote control is in order.

A remote control system consists of three basic components. The first is the transmitter, or “encoder.” Moving the controls on the transmitter causes it to send, or encode, signals to the second part of the remote control system, the receiver, or decoder. The receiver gets the signals from the transmitter and then decodes them. Depending on which signal the receiver decoded, it will activate a servo, the third part of the system. Servos are the mechanical part of a remote control system.

A wheel or sometimes a bar on the servo will turn in proportion to the movement of the transmitter’s control. This movement can then be used to directly control the function of a robot, or in Questor’s case to trip switches that control his movements.

Questor’s remote control system is a standard off-the-shelf type like that pictured in Fig. 4-1. Notice the three main parts of the system. The robot requires a system with a minimum of two channels.

A two-channel system has two servos; each of the servos is used to control one of the robot’s motorized wheels. The system used in my version of Questor has three channels; the third channel is used to trip two switches that can turn other items on the robot on or off.

The switches that the servos trip are called leaf switches (see the figure below). A leaf switch is a very small on/off switch that is triggered by depressing a small metal strip or “leaf” on the switch. By using four leaf switches, it is possible to recreate the function of one of the DPDT switches used in the temporary control box.

A total of eight switches is needed to duplicate the function of the DPDT switches used to control the robot’s motorized wheels. One servo is then used to trip four switches in such a way as to drive the wheel either forward or in reverse. You use the control sticks on the remote control transmitter in the same way as you flipped the DPDT switches on the temporary control box: up is forward, center is off, and down is reverse.
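The way four on/off leaf switches can stand in for a DPDT reversing switch can be modeled in a few lines. The switch numbering, which battery pole and motor terminal each switch connects, and the 12 V battery are all hypothetical; the point is that one pair of switches connects the motor "straight" and the other pair connects it "crossed", which reverses the polarity and therefore the wheel direction.

```python
BATTERY_VOLTS = 12.0  # assumed battery voltage

# Hypothetical wiring of one wheel's four leaf switches:
# switch number -> (battery pole voltage it connects, motor terminal it feeds)
SWITCH_WIRING = {
    1: (BATTERY_VOLTS, "A"),  # + to terminal A
    2: (0.0, "B"),            # - to terminal B
    3: (BATTERY_VOLTS, "B"),  # + to terminal B
    4: (0.0, "A"),            # - to terminal A
}

# Which switches the servo presses at each stick position (assumed).
SWITCHES_CLOSED = {"up": {1, 2}, "center": set(), "down": {3, 4}}


def motor_voltage(stick):
    """Voltage across the wheel motor (terminal A minus B) for a stick position."""
    terminal = {}
    for switch in SWITCHES_CLOSED[stick]:
        volts, term = SWITCH_WIRING[switch]
        if term in terminal and terminal[term] != volts:
            raise ValueError("short circuit: terminal tied to both poles")
        terminal[term] = volts
    if len(terminal) < 2:
        return 0.0  # at least one terminal floating: the motor is off
    return terminal["A"] - terminal["B"]


for position in ("up", "center", "down"):
    print(position, motor_voltage(position))
```

The short-circuit check reflects the practical rule for any such wiring: the servo must never close a "straight" and a "crossed" switch on the same terminal at once.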

If you choose a remote control system with more than two channels, you can use the other servos to trip leaf switches for turning other devices on or off, or to control motors (forward, stop, and reverse) within the robot. The third servo of my remote control system is used to turn a horn on and off.

You need only one leaf switch per function if that function is only to be turned on or off. The figure above shows how the leaf switches are positioned and triggered for either on/off or forward/reverse control. By now you’re probably wondering where all this fits inside of Questor. The remote control system (servos and receiver), leaf switches, and other components are mounted on a motherboard that is then installed inside Questor’s framework.

Sunday, April 6, 2008

Camera Sensor Data

We have to distinguish between grayscale and color cameras, although, as we will see, there is only a minor difference between the two. The simplest available sensor chips provide a grayscale image of 120 lines by 160 columns with 1 byte per pixel (for example VLSI Vision VV5301 in grayscale or VV6301 in color). A value of zero represents a black pixel, a value of 255 is a white pixel, and everything in between is a shade of gray. The figure below illustrates such an image. The camera transmits the image data in row-major order, usually after a certain frame-start sequence.
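Row-major order means that a 120 × 160 frame arrives as 19,200 consecutive bytes, row 0 first, so pixel (row, col) sits at offset row · 160 + col. A minimal Python sketch, assuming the frame-start sequence has already been stripped:

```python
ROWS, COLS = 120, 160  # grayscale sensor resolution, 1 byte per pixel


def pixel_at(frame, row, col):
    """Grey value at (row, col) in a row-major frame: 0 = black, 255 = white."""
    return frame[row * COLS + col]


def split_rows(frame):
    """Cut a complete row-major frame into a list of ROWS rows of COLS bytes."""
    if len(frame) != ROWS * COLS:
        raise ValueError(f"expected {ROWS * COLS} bytes, got {len(frame)}")
    return [frame[r * COLS:(r + 1) * COLS] for r in range(ROWS)]
```

Usage: `pixel_at(frame, 3, 5)` reads byte 3 · 160 + 5 = 485 of the received stream.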

Creating a color camera sensor chip from a grayscale camera sensor chip is very simple. All it needs is a layer of paint over the pixel mask. The standard technique for pixels arranged in a grid is the Bayer pattern (Figure 2.17). Pixels in odd rows (1, 3, 5, etc.) are colored alternately in green and red, while pixels in even rows (2, 4, 6, etc.) are colored alternately in blue and green.
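The rule above can be written as a lookup from pixel position to filter color. The text fixes which colors share a row but not which color each row starts with, so this sketch assumes the green/red rows begin with red, giving the repeating 2 × 2 tile R G / G B. It also uses 0-based indices, so the text's row 1 is row 0 here.

```python
ROWS, COLS = 120, 160


def bayer_color(row, col):
    """Filter colour at 0-based (row, col), assuming the tile R G / G B."""
    if row % 2 == 0:                      # text's odd rows 1, 3, 5, ...
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"   # text's even rows 2, 4, 6, ...


counts = {"R": 0, "G": 0, "B": 0}
for r in range(ROWS):
    for c in range(COLS):
        counts[bayer_color(r, c)] += 1
print(counts)
```

Half of all pixels carry a green filter, a quarter red, and a quarter blue.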

With this colored filter over the pixel array, each pixel only records the intensity of a certain color component. For example, a pixel with a red filter will only record the red intensity at its position. At first glance, this requires 4 bytes per color pixel: green and red from one line, and blue and green (again) from the line below. This would effectively result in a 60×80 color image with an additional, redundant green byte per pixel. However, there is one thing that is easily overlooked. The four components red, green1, blue, and green2 are not sampled at the same position. For example, the blue sensor pixel is below and to the right of the red pixel. So by treating the four components as one pixel, we have already applied some sort of filtering and lost information.
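The simple 2 × 2 grouping described above can be sketched as follows. Each tile of the 120 × 160 raw image becomes one RGB pixel of a 60 × 80 image, and the two greens are averaged. The R G / G B tile phase is an assumption, and `uniform_raw` is a hypothetical helper that builds a flat-colored test frame.

```python
ROWS, COLS = 120, 160


def bayer_color(row, col):
    """Filter colour at 0-based (row, col), assuming the tile R G / G B."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def bin_2x2(raw):
    """Combine each 2x2 tile into one (r, g, b) pixel, averaging the two greens."""
    image = []
    for r in range(0, len(raw), 2):
        row = []
        for c in range(0, len(raw[0]), 2):
            red = raw[r][c]
            green = (raw[r][c + 1] + raw[r + 1][c]) / 2
            blue = raw[r + 1][c + 1]
            row.append((red, green, blue))
        image.append(row)
    return image


def uniform_raw(red, green, blue, rows=ROWS, cols=COLS):
    """Synthetic raw frame of a single flat colour, for checking bin_2x2."""
    level = {"R": red, "G": green, "B": blue}
    return [[level[bayer_color(r, c)] for c in range(cols)] for r in range(rows)]


image = bin_2x2(uniform_raw(200, 100, 50))
print(len(image), len(image[0]), image[0][0])
```

Note that `bin_2x2` silently treats four samples from different positions as one pixel, which is exactly the information loss pointed out above.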

A technique called “demosaicing” can be used to restore the image in full 120×160 resolution and in full color. This technique basically recalculates the three color component values (R, G, B) for each pixel position, for example by averaging the four closest component neighbors of the same color. The figure below shows the three sets of four pixels used for demosaicing the red, green, and blue components of the pixel at position [3,2] (assuming the image starts in the top left corner with [0,0]).
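A simple averaging demosaic can be sketched as below. This is a slight variant of the "four closest neighbors" rule, known as bilinear demosaicing: a color measured at the pixel itself is kept, and each missing color is the average of the same-colored pixels in the surrounding 3 × 3 window (two or four of them, fewer at the border). The R G / G B tile phase is again an assumption.

```python
def bayer_color(row, col):
    """Filter colour at 0-based (row, col), assuming the tile R G / G B."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def demosaic_pixel(raw, row, col):
    """Full (r, g, b) at one position from a single-colour-per-pixel raw frame."""
    rows, cols = len(raw), len(raw[0])
    own = bayer_color(row, col)
    rgb = []
    for color in "RGB":
        if color == own:
            rgb.append(raw[row][col])   # measured directly at this pixel
            continue
        # Average all same-colour pixels in the 3x3 neighbourhood (clipped at
        # the border); every 2x2 window holds R, G and B, so this is never empty.
        samples = [raw[r][c]
                   for r in range(max(row - 1, 0), min(row + 2, rows))
                   for c in range(max(col - 1, 0), min(col + 2, cols))
                   if bayer_color(r, c) == color]
        rgb.append(sum(samples) / len(samples))
    return tuple(rgb)


def demosaic(raw):
    """Apply demosaic_pixel to every position of the raw frame."""
    return [[demosaic_pixel(raw, r, c) for c in range(len(raw[0]))]
            for r in range(len(raw))]
```

Usage: for the pixel at [3,2], which is green under the assumed phase, the red value is averaged from [2,2] and [4,2] and the blue value from [3,1] and [3,3].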

Averaging, however, is only the simplest method of image value restoration and does not produce the best results. A number of articles have researched better algorithms for demosaicing [Kimmel 1999], [Muresan, Parks 2002].

Camera Sensor Hardware

In recent years we have experienced a shift in camera sensor technology. The previously dominant CCD (charge coupled device) sensor chips are now being overtaken by the cheaper-to-produce CMOS (complementary metal oxide semiconductor) sensor chips.

The brightness sensitivity range for CMOS sensors is typically larger than that of CCD sensors by several orders of magnitude. For interfacing to an embedded system, however, this does not make a difference. Most sensors provide several different interfacing protocols that can be selected via software.

On the one hand, this allows a more versatile hardware design, but on the other hand sensors become as complex as another microcontroller system, and therefore software design becomes quite involved. Typical hardware interfaces for camera sensors are 16-bit parallel, 8-bit parallel, 4-bit parallel, or serial.

In addition, a number of control signals have to be provided from the controller. Only a few sensors buffer the image data and allow arbitrarily slow reading from the controller via handshaking. This is an ideal solution for slower controllers. However, the standard camera chip provides its own clock signal and sends the full image data as a stream with some frame-start signal. This means the controller CPU has to be fast enough to keep up with the data stream.

The parameters that can be set in software vary between sensor chips. Most common are the setting of frame rate, image start in (x,y), image size in (x,y), brightness, contrast, color intensity, and auto-brightness. The simplest camera interface to a CPU is shown in the figure below.

The camera clock is linked to a CPU interrupt, while the parallel camera data output is connected directly to the data bus. Every single image byte from the camera will cause an interrupt at the CPU, which will then enable the camera output and read one image data byte from the data bus. Every interrupt creates considerable overhead, since system registers have to be saved and restored on the stack. Starting and returning from an interrupt takes about 10 times the execution time of a normal command, depending on the microcontroller used. Therefore, creating one interrupt per image byte is not the best possible solution. It would be better to buffer a number of bytes and then use an interrupt much less frequently to do a bulk data transfer of image data.
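The cost argument can be made concrete with a little arithmetic. One 120 × 160 grayscale frame is 19,200 bytes. With one interrupt per byte and the text's estimate of about 10 normal instruction times per interrupt, entering and leaving interrupts alone costs 192,000 instruction times per frame. If instead each interrupt moves a 512-byte block (half of an assumed 1 KB buffer), only 38 interrupts are needed.

```python
import math

FRAME_BYTES = 120 * 160  # one grayscale frame, 1 byte per pixel
INTERRUPT_COST = 10      # interrupt entry + return ~ 10 normal instructions


def interrupts_per_frame(bytes_per_interrupt):
    return math.ceil(FRAME_BYTES / bytes_per_interrupt)


def interrupt_overhead(bytes_per_interrupt):
    """Instruction times per frame spent only on entering/leaving interrupts."""
    return interrupts_per_frame(bytes_per_interrupt) * INTERRUPT_COST


for chunk in (1, 512):   # one byte per interrupt vs. half of an assumed 1 KB FIFO
    print(chunk, interrupts_per_frame(chunk), interrupt_overhead(chunk))
```

The copy work per byte remains in both cases; only the fixed per-interrupt overhead shrinks by the block size.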

The figure below shows this approach using a FIFO buffer for intermediate storage of image data. The advantage of a FIFO buffer is that it supports unsynchronized read and write in parallel. So while the camera is writing data to the FIFO buffer, the CPU can read data out, with the remaining buffer contents staying undisturbed. The camera output is linked to the FIFO input, with the camera’s pixel clock triggering the FIFO write line. From the CPU side, the FIFO data output is connected to the system’s data bus, with the chip select triggering the FIFO read line. The FIFO provides three additional status lines:

• Empty flag

• Full flag

• Half full flag

These digital outputs of the FIFO can be used to control the bulk reading of data from the FIFO. Since there is a continuous data stream going into the FIFO, the most important of these lines in our application is the half full flag, which we connected to a CPU interrupt line. Whenever the FIFO is half full, we initiate a bulk read operation of 50% of the FIFO’s contents. Assuming the CPU responds quickly enough, the full flag should never be activated, since this would indicate an imminent loss of image data.
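The half-full scheme can be checked with a small software model. The sketch below simulates a FIFO with empty, full, and half-full flags; the camera writes one byte per pixel clock, and the half-full flag triggers an interrupt handler that runs a given number of pixel clocks later (its latency) and reads half the FIFO in one go. The 64-byte FIFO size and the latency values are assumptions for illustration.

```python
from collections import deque


class Fifo:
    """Model of a hardware FIFO with empty, full and half-full status flags."""

    def __init__(self, size):
        self.size = size
        self.data = deque()
        self.overflowed = False

    def write(self, byte):
        """Camera pixel clock drives the write line; a full FIFO drops the byte."""
        if self.full:
            self.overflowed = True
        else:
            self.data.append(byte)

    def read(self):
        """CPU chip select drives the read line."""
        return self.data.popleft()

    @property
    def empty(self):
        return not self.data

    @property
    def full(self):
        return len(self.data) >= self.size

    @property
    def half_full(self):
        return len(self.data) >= self.size // 2


def capture(frame, fifo_size, latency):
    """Stream one frame through the FIFO. The half-full flag raises an interrupt
    whose handler runs `latency` pixel clocks later and reads half the FIFO."""
    fifo = Fifo(fifo_size)
    received = []
    countdown = None                 # pixel clocks until the handler runs
    for byte in frame:
        fifo.write(byte)
        if countdown is None and fifo.half_full:
            countdown = latency
        if countdown is not None:
            if countdown == 0:
                for _ in range(fifo_size // 2):   # bulk read of 50%
                    received.append(fifo.read())
                countdown = None
            else:
                countdown -= 1
    while not fifo.empty:            # end of frame: drain what is left
        received.append(fifo.read())
    return received, fifo.overflowed


frame = bytes(range(256)) * 75           # 19,200 bytes = one 120x160 frame
print(capture(frame, 64, 10)[1])         # fast handler: no overflow (False)
print(capture(frame, 64, 100)[1])        # slow handler: full flag hit (True)
```

In this model the FIFO never fills as long as the handler starts within half a FIFO's worth of pixel clocks, which is the "CPU responds quickly enough" condition from the text.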

Tuesday, April 1, 2008

MOTHERBOARD

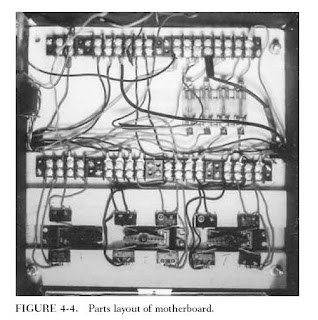

The motherboard is simply a 10 × 10 × 1/8-inch piece of plywood on which all of the components for the remote control system are mounted. The various components consist of the remote control system’s servos, receiver, and battery pack, along with ten leaf switches, four barrier strips, and a four-slot fuse holder. Figure 4-4 shows where each item is placed on the board.

The first items to be mounted are the servos. Cutouts will have to be made in the board to allow the servos to sit flush with the board. To do this, first place the servos evenly spaced on the motherboard and trace around their bases. Cut out the wood where traced and slip the servos in place. Each servo’s body should have tabs sticking out along its top edge; these tabs prevent the servo from going all the way through the board, and this is where the servos are screwed to the board. Most remote control systems come with plastic wheels and/or star levers that are screwed onto the servo’s motor.

WIRING THE MOTHERBOARD:

Before you wire the motherboard, cut two notches on each side of the motherboard so the wires will not go past its edge. Figure 4-8 shows how to wire together the components on the motherboard. There are two main rows of barrier strips on the motherboard; the first row is numbered.

These numbers correspond to numbers on the tabs of the leaf switches; simply wire the matching numbers together. In some cases more than one wire will go to one post on the barrier strip. Use the half of the barrier strip closest to the leaf switches. The wire color to use is indicated on the leaf switch: R = red, B = black. The other row is where the motorized wheel and horn will be connected; they too use the matching number system.

The second row is divided into two parts called power grids. The first eight posts (one complete barrier strip) are called the positive power grid; this is where the positive lead of the battery is connected and where all the positive or red wires from Questor’s electronics will be connected. The second eight posts are for the negative or black wires and are called the negative power grid. All the posts on the same side of each grid must be wired together by one wire run from post to post.

Be sure not to run a wire between the positive and negative grids; this will cause a short circuit. Figure 4-8 shows where the wire runs. Later when other functions are wired, the instructions will say “wire to positive power grid and negative power grid.” You can then connect those wires to any open post on the grids. Figure 4-8 also shows four wires coming from the positive grid to the fuse holder. These wires are all positive and you should use red wires.

Two more red wires run from the opposite ends of two of the fuses directly to the post on the leaf switch barrier strips. This is where the switch gets the power to control two on/off functions in the robot. (The negative or black wire forms the function being controlled; in my robot a horn is wired directly to the positive power grid.) There are also two black wires running from the negative power grid to the leaf switch barrier strips at posts 8 and 2.

These are also shown in the figure below.

Wires to the leaf switches and fuse holder will have to be soldered. The wires that lead to the barrier strips should have hooks bent at their ends so they can wrap around the screws on the strip. After the board is wired, check it against the wiring figure, because errors here can affect the function of the rest of the robot. Also at this time, install four 20-amp fuses in the fuse holder. These fuses help protect the robot’s components from short circuits and overloads. Once the board is wired and checked, the remote control receiver can be mounted and the motherboard mounted in Questor’s framework.

COMPLETING THE MOTHERBOARD:

The remote control’s receiver and battery are mounted on the underside of the motherboard. Using four screw-on hooks, rubber bands, and foam rubber, the receiver is held securely in place. The figure shows how to mount the receiver and is self-explanatory. The only thing to keep in mind is that the servos must be wired to the receiver, so don’t mount the receiver out of reach of the servo wires.

The order in which the servos are connected to the receiver is very important to the control of the robot. When both control sticks on the transmitter are pushed up, the robot should move forward. If both sticks are pulled down, the robot should run in reverse. The center or neutral position is off and of course causes no movement of the robot. If you have a third channel (and servo) in your remote control system, it should react to the sideways movement of one of the control sticks on the transmitter.

Table 4-2 lists all of the control combinations used to operate Questor’s functions. It is not necessary to wire the motorized wheels to the motherboard to check this; simply make sure that when the sticks are pushed forward, the two servos controlling the motorized wheels turn as shown in Fig. 4-10. If you have a third servo, a sideways movement of either stick should activate it.
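Since Table 4-2 is not reproduced here, the following sketch covers only the combinations stated in the text (both sticks up, both down, both centered) plus an assumed rule for mixed positions, where the wheel driven harder pushes the robot around the other one. It also assumes the left stick drives the left wheel. It can serve as a checklist when testing the servo wiring.

```python
WHEEL = {"up": 1, "center": 0, "down": -1}  # stick position -> wheel direction


def robot_motion(left_stick, right_stick):
    """Resulting motion of the robot for the two transmitter stick positions."""
    left, right = WHEEL[left_stick], WHEEL[right_stick]
    if left == right == 1:
        return "forward"
    if left == right == -1:
        return "reverse"
    if left == right == 0:
        return "stopped"
    # Mixed positions (assumption, not taken from Table 4-2): the faster
    # wheel pushes the robot around the slower one.
    return "turn right" if left > right else "turn left"


for sticks in [("up", "up"), ("down", "down"), ("center", "center"), ("up", "down")]:
    print(sticks, robot_motion(*sticks))
```

If the robot turns when both sticks are pushed up, one servo's switches are wired in the reverse order and its leads on the barrier strip should be swapped.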